Sanctions screening systems are drowning in false positives: as many as 95% of alerts turn out to be harmless. This inefficiency costs compliance teams time and money, with manual investigations taking up to 20 minutes per alert and global costs reaching $2.6 billion annually. AI is changing the game. Research and vendor reports cite false positives cut by as much as 92%, review times reduced to 30 seconds, and 100% precision in approved onboardings.

Here’s how AI achieves this:

Advanced Name Matching: NLP and large language models (LLMs) analyze name variations and reduce irrelevant matches.

Risk Scoring: Machine learning assigns probabilities instead of relying on rigid rules, cutting unnecessary alerts by 30-60%.

Explainable AI (XAI): Systems now provide clear, detailed explanations for decisions, meeting regulatory requirements.

Contextual Data Use: Incorporating identifiers like birth dates and addresses improves accuracy.

AI Agents: Alerts are ranked by risk, allowing teams to focus on critical cases.

AI-driven systems save time, reduce costs, and support compliance, making them essential for modern sanctions screening in both stablecoin payments and traditional finance.

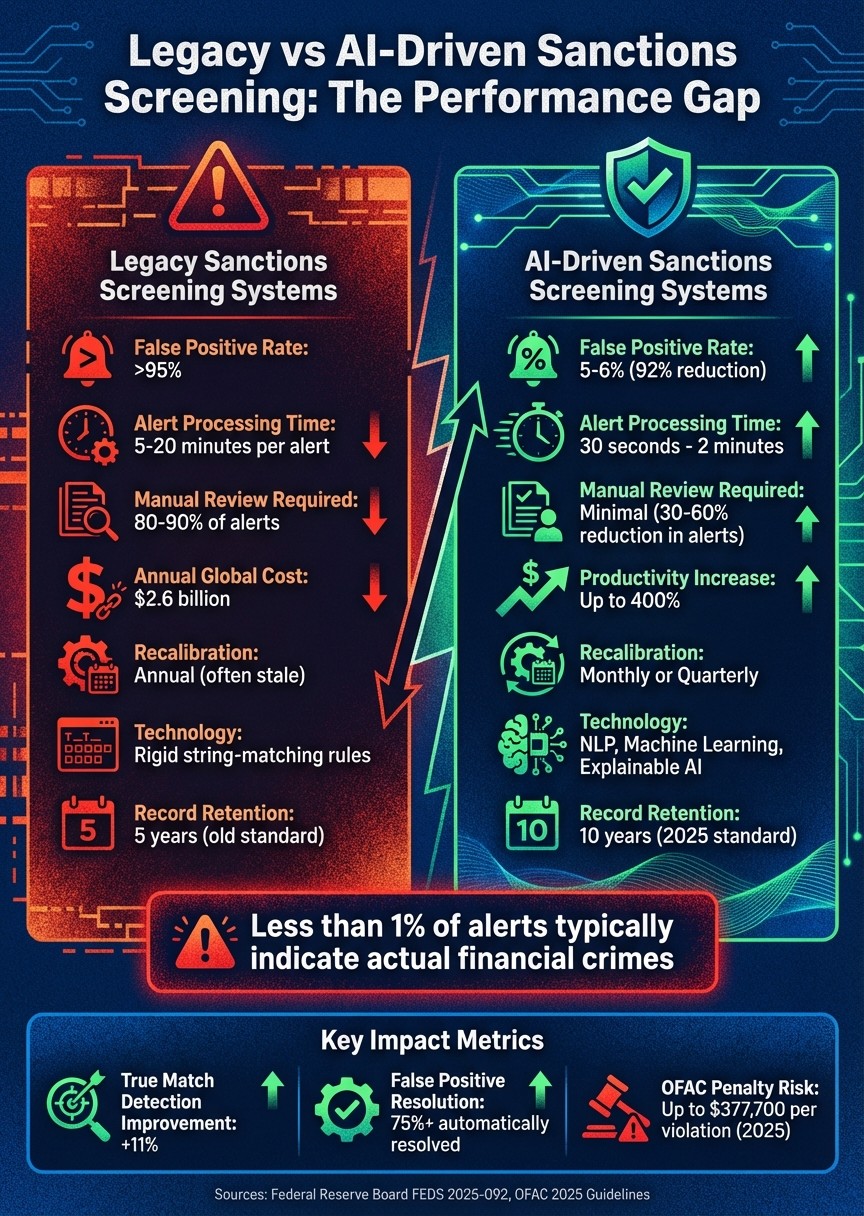

Legacy vs AI-Driven Sanctions Screening: Performance Comparison


What Are False Positives in Sanctions Screening

A false positive happens when a screening system flags a legitimate customer or transaction as a match against a sanctions list, such as OFAC's Specially Designated Nationals (SDN) list or the UN and EU consolidated lists, even though there’s no actual connection. These alerts often arise from minor issues such as similar names, shared initials, or slight spelling variations, which makes it hard to separate genuine threats from harmless cases. Understanding where false positives come from is key to designing AI strategies that cut down on this inefficiency.

The main reasons behind false positives are structural. Similar names and matching initials are major triggers, especially with common names that generate a flood of alerts. Other factors include transliteration problems (e.g., converting Arabic or Cyrillic names into Latin script), incomplete customer data (like missing birth dates or passport numbers), and outdated technology that relies on rigid string-matching rules. To ensure no real threats are missed, institutions often widen their matching criteria, which only increases the number of false alerts. These built-in limitations create a heavy operational workload.
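To see why widening the criteria backfires, here is a minimal sketch of a rigid fuzzy matcher, assuming Python's standard-library `difflib.SequenceMatcher` as a stand-in for a legacy string-matching engine. The watchlist entries, customer names, and thresholds are hypothetical, and we assume none of the customers is actually the listed party, so every hit counts as a false positive.

```python
from difflib import SequenceMatcher

# Hypothetical list entries and customer names. Assume none of the customers
# is actually a listed party, so every hit below is a false positive.
WATCHLIST = ["Mohammed Al-Rashid", "Ivan Petrov", "Global Trade LLC"]
CUSTOMERS = [
    "Muhammad Al Rashid", "Ivan Petrova", "Global Traders Inc",
    "Mohammed Rashidi", "Ivana Petrovic", "Globe Trade LLC",
]


def normalize(name: str) -> str:
    """Lowercase, turn hyphens into spaces, and collapse whitespace."""
    return " ".join(name.lower().replace("-", " ").split())


def similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1]; a stand-in for a legacy fuzzy matcher."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def screen(threshold: float) -> list[tuple[str, str, float]]:
    """Return every (customer, list entry, score) pair at or above the threshold."""
    hits = []
    for customer in CUSTOMERS:
        for entry in WATCHLIST:
            score = similarity(customer, entry)
            if score >= threshold:
                hits.append((customer, entry, round(score, 2)))
    return hits


# Widening the criteria (lowering the threshold) can only add alerts.
for threshold in (0.95, 0.85, 0.75):
    print(f"threshold {threshold}: {len(screen(threshold))} alerts")
```

Lowering the threshold never removes an alert, and because the matcher compares characters alone, nothing in the score separates a transliteration of a genuinely listed name from a coincidental look-alike.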

The operational impact is massive. Compliance teams end up manually reviewing 80% to 90% of these alerts. Each investigation can take 15 to 25 minutes, which means a mid-sized exporter running 200 screenings per day might spend 2.5 to 5 hours daily ruling out harmless matches. As one MLRO quoted by LatentBridge Insights put it, "Our issue isn't missing risk. Our issue is surviving the volume."
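The workload estimate is simple arithmetic. The text does not say how many of the 200 daily screenings raise alerts; the 2.5 to 5 hour range at 15 to 25 minutes per alert implies roughly 10 to 12 alerts a day (about 5% to 6% of screenings), which is an inference rather than a stated figure. A minimal sketch, using the 30-second AI-assisted review time cited elsewhere in this article for comparison:

```python
def daily_review_hours(alerts_per_day: int, minutes_per_alert: float) -> float:
    """Analyst hours per day spent clearing alerts by hand."""
    return alerts_per_day * minutes_per_alert / 60


# Assumption: about 10-12 of the 200 daily screenings generate an alert.
print(daily_review_hours(10, 15))   # 2.5 hours: lower bound of the range
print(daily_review_hours(12, 25))   # 5.0 hours: upper bound of the range
print(daily_review_hours(12, 0.5))  # 0.1 hours: same alerts at ~30 seconds each
```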

The financial and regulatory stakes are also high. Globally, processing false positives costs $2.6 billion annually. On top of that, OFAC penalties for sanctions violations can reach $377,700 per violation as of January 2025. High volumes of alerts can lead to rushed decisions and poor documentation, both of which regulators scrutinize closely. For example, in September 2025, freight forwarder Fracht settled with OFAC over a contract with a sanctioned Venezuelan airline; the case involved a surge in alerts and a failure to properly disclose the issues.

Alert fatigue adds another layer of risk. When analysts are overwhelmed by thousands of irrelevant alerts, real threats can slip through the cracks. In fact, less than 1% of alerts typically point to actual financial crimes. Yet, every false positive still demands documented evidence to comply with regulatory standards. This highlights the importance of advanced AI tools that can better separate true risks from false alarms.

AI Techniques That Reduce False Positives

Artificial intelligence is reshaping the way institutions manage the overwhelming number of false alerts. By integrating contextual data, historical patterns, and clear explanations, these advanced methods go far beyond basic string matching.

Fuzzy Matching and NLP for Name Variations

Modern natural language processing (NLP) techniques tackle the tricky problem of name variations by working out what each part of a name represents, not just how it is spelled. For example, OpenSanctions' logic-v2 matcher annotates name tokens with "symbols", recognizing that "LLC" signifies an organization type or that "John" is a personal name, before making comparisons. This contextual understanding lets the system ignore generic terms and focus on the distinctive elements of a name.
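A toy version of symbolic tagging helps show the idea. This is not OpenSanctions' implementation: the three word sets below are hypothetical stand-ins for its much larger Wikidata-derived taxonomy, and comparing only "distinctive" tokens with Jaccard overlap is an assumption made for illustration.

```python
# Hypothetical mini-taxonomy; a real matcher draws on a far larger one.
ORG_TYPES = {"llc", "ltd", "inc", "corporation", "corp", "gmbh"}
GENERIC_TERMS = {"industries", "trading", "group", "holdings", "international"}
GIVEN_NAMES = {"john", "mohammed", "muhammad", "ivan", "maria"}


def tag_tokens(name: str) -> list[tuple[str, str]]:
    """Label each token as ORG_TYPE, GENERIC, GIVEN_NAME, or DISTINCTIVE."""
    tagged = []
    for token in name.lower().replace(",", " ").replace(".", " ").split():
        if token in ORG_TYPES:
            tag = "ORG_TYPE"
        elif token in GENERIC_TERMS:
            tag = "GENERIC"
        elif token in GIVEN_NAMES:
            tag = "GIVEN_NAME"
        else:
            tag = "DISTINCTIVE"
        tagged.append((token, tag))
    return tagged


def distinctive_overlap(a: str, b: str) -> float:
    """Jaccard overlap of DISTINCTIVE tokens only; all other tags are set aside."""
    da = {tok for tok, tag in tag_tokens(a) if tag == "DISTINCTIVE"}
    db = {tok for tok, tag in tag_tokens(b) if tag == "DISTINCTIVE"}
    if not da or not db:
        return 0.0
    return len(da & db) / len(da | db)


print(tag_tokens("John Smith LLC"))
print(distinctive_overlap("Acme Industries LLC", "Zenith Industries LLC"))  # 0.0
print(distinctive_overlap("Acme Trading Ltd.", "ACME Corporation"))         # 1.0
```

Two companies that share only boilerplate ("Industries", "LLC") score 0.0, while the same distinctive name with a different legal form scores 1.0. A production matcher would still compare given names and legal forms as separate signals rather than discarding them.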

This method has shown impressive results. In November 2025, a Federal Reserve Board working paper (FEDS 2025-092) by Jeffrey S. Allen and Max S.S. Hatfield analyzed large language models (LLMs) from Anthropic, Meta (Llama), Mistral AI, and Amazon (Nova). The study revealed that these LLMs reduced false positives by about 92% compared to traditional fuzzy matching approaches while improving true-match detection by approximately 11%. The authors highlighted:

The LLMs delivered a much clearer separation: very low scores for innocents; very high for actual hits.

However, LLMs can be significantly slower than traditional algorithms, by a factor of up to 10,000. To address this, institutions use a cascade architecture: a fast, traditional fuzzy screen handles initial matching, and only ambiguous cases are escalated to an LLM for closer analysis. This balances speed against accuracy. Building on these advancements, machine learning has introduced probability-based risk scoring.
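A minimal sketch of the cascade, assuming a cheap `difflib` similarity as the fast screen. The band edges (0.60 and 0.95) and `placeholder_llm_scorer` are hypothetical; in practice the slow scorer would wrap a call to whichever LLM the institution uses.

```python
from difflib import SequenceMatcher
from typing import Callable

# Hypothetical band edges: scores below CLEAR_BELOW are auto-cleared, scores at
# or above ALERT_AT go straight to an analyst, everything between is ambiguous.
CLEAR_BELOW = 0.60
ALERT_AT = 0.95


def fast_score(a: str, b: str) -> float:
    """Cheap first-pass similarity; a stand-in for a traditional fuzzy matcher."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def cascade(customer: str, entry: str,
            slow_scorer: Callable[[str, str], float]) -> tuple[str, str]:
    """Return (decision, stage). Only ambiguous pairs reach the slow scorer."""
    score = fast_score(customer, entry)
    if score < CLEAR_BELOW:
        return "clear", "fast"
    if score >= ALERT_AT:
        return "alert", "fast"
    # Ambiguous band: escalate to the expensive model (an LLM call in the text).
    decision = "alert" if slow_scorer(customer, entry) >= 0.5 else "clear"
    return decision, "slow"


def placeholder_llm_scorer(a: str, b: str) -> float:
    """Hypothetical stand-in for an LLM that returns a match probability."""
    return 0.1


print(cascade("Acme", "Zenith Holdings", placeholder_llm_scorer))       # ('clear', 'fast')
print(cascade("Ivan Petrov", "Ivan Petrov", placeholder_llm_scorer))    # ('alert', 'fast')
print(cascade("Ivana Petrovic", "Ivan Petrov", placeholder_llm_scorer)) # ('clear', 'slow')
```

Only pairs that land in the ambiguous band pay for the slow call, so overall throughput depends mainly on how wide that band is.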

Machine Learning Models for Risk Scoring

Machine learning brings a new level of sophistication to sanctions screening by shifting from rigid rules to probability-based assessments. Instead of treating all character swaps equally, these models evaluate factors like geographic data, known aliases, language patterns, and behavioral history. This approach assigns risk scores that better reflect the actual likelihood of a match. Supervised models learn from past compliance decisions, while unsupervised learning identifies new risk patterns that might otherwise be missed.
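Probability-based scoring can be illustrated with a hand-weighted logistic model. The feature names, weights, and bias below are hypothetical; a real supervised model would learn them from past analyst decisions (for example with logistic regression or gradient-boosted trees) and would be validated before use.

```python
import math

# Hypothetical weights; a supervised model would learn these from past dispositions.
WEIGHTS = {
    "name_similarity": 6.0,   # 0..1 score from the name matcher
    "dob_match": 2.5,         # 1 if date of birth agrees
    "dob_conflict": -4.0,     # 1 if date of birth clearly disagrees
    "country_match": 1.5,     # 1 if nationality or country agrees
    "known_alias_hit": 2.0,   # 1 if the hit was on a listed alias
}
BIAS = -6.0


def match_probability(features: dict[str, float]) -> float:
    """Logistic model: sigmoid of the bias plus a weighted sum of features."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1 / (1 + math.exp(-z))


strong = match_probability({"name_similarity": 0.95, "dob_match": 1, "country_match": 1})
weak = match_probability({"name_similarity": 0.90, "dob_conflict": 1})
print(f"{strong:.3f} {weak:.3f}")  # roughly 0.976 and 0.010
```

The two names are almost equally similar, but the surrounding evidence pushes one toward near-certainty and the other toward dismissal, which a single string-similarity cut-off cannot do.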

Institutions using contextual AI filters often see a 30% to 60% drop in non-productive alerts without compromising their controls.

The success of machine learning depends heavily on clean, structured data. For instance, separating first and last names into distinct fields reduces ambiguity. Regular recalibrations - done quarterly or monthly - are also essential to adjust match thresholds in response to updates in sanctions lists and evolving evasion tactics. Alongside these models, explainable AI (XAI) plays a key role in ensuring transparency.
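Threshold recalibration can be framed as a search over past dispositions. This minimal sketch assumes a history of (score, confirmed true match) pairs and picks the strictest threshold that still catches a target share of confirmed true matches; the sample data and the 100% recall target are illustrative assumptions, and recall on past alerts is no guarantee against new evasion patterns.

```python
def recalibrate_threshold(history: list[tuple[float, bool]],
                          min_recall: float = 1.0) -> float:
    """Strictest threshold that still catches `min_recall` of confirmed true matches.

    history holds (match score, analyst-confirmed true match?) pairs.
    """
    true_scores = [score for score, is_true in history if is_true]
    if not true_scores:
        raise ValueError("history needs at least one confirmed true match")
    # Try candidate thresholds from strictest to loosest; stop at the first that works.
    for threshold in sorted({score for score, _ in history}, reverse=True):
        recall = sum(score >= threshold for score in true_scores) / len(true_scores)
        if recall >= min_recall:
            return threshold
    # Not reached for min_recall <= 1: the lowest score always gives recall 1.0.
    return min(score for score, _ in history)


def alert_volume(history: list[tuple[float, bool]], threshold: float) -> int:
    """How many historical items would have alerted at this threshold."""
    return sum(score >= threshold for score, _ in history)


history = [(0.97, True), (0.91, True), (0.88, False), (0.84, True),
           (0.80, False), (0.72, False), (0.65, False)]
threshold = recalibrate_threshold(history)
print(threshold, alert_volume(history, threshold))  # 0.84 4
```

On this sample history, a 0.84 cut-off keeps every confirmed true match while three of the seven historical alerts would no longer be generated.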

Explainable AI for Transparency

Explainable AI enhances statistical models by providing detailed, plain-language explanations for every alert. Instead of just offering a similarity score, XAI breaks down the reasoning behind decisions. For example, it might explain: "First names match, last names are a fuzzy 0.67 match" or "Entities both linked to country X". This clarity is vital for regulatory compliance, especially since OFAC extended record-keeping requirements to 10 years as of March 2025.
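Explanations like these can be generated directly from field-level comparisons. A minimal sketch, assuming records are plain dictionaries with hypothetical `first_name`, `last_name`, `dob`, and `country` keys and `difflib` similarity as the fuzzy score:

```python
from difflib import SequenceMatcher


def field_similarity(a: str, b: str) -> float:
    """Case-insensitive character similarity in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def explain(customer: dict, entry: dict) -> str:
    """Turn field-by-field comparisons into a plain-language alert explanation."""
    reasons = []
    for field, label in (("first_name", "First names"), ("last_name", "Last names")):
        a, b = customer.get(field), entry.get(field)
        if not a or not b:
            reasons.append(f"{label}: not available for comparison")
            continue
        score = field_similarity(a, b)
        if score == 1.0:
            reasons.append(f"{label} match")
        else:
            reasons.append(f"{label} are a fuzzy {score:.2f} match")
    dob_a, dob_b = customer.get("dob"), entry.get("dob")
    if dob_a and dob_b:
        reasons.append("DOB matches" if dob_a == dob_b
                       else f"DOB mismatch ({dob_a} vs listed {dob_b})")
    else:
        reasons.append("DOB not available for comparison")
    country_a, country_b = customer.get("country"), entry.get("country")
    if country_a and country_a == country_b:
        reasons.append(f"Both linked to {country_a}")
    return "; ".join(reasons)


print(explain(
    {"first_name": "John", "last_name": "Smith", "dob": "1980-01-01"},
    {"first_name": "John", "last_name": "Smyth", "dob": "1975-06-30"},
))
```

Every clause traces back to a specific field comparison, which is what makes the output something an analyst can check and a regulator can follow.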

Rule-based models ensure consistent results, avoiding the pitfalls of opaque calculations. As OpenSanctions noted:

"A herd of 384-dimensional vectors told me so" is also an awkward explanation to share with a regulator who demands to understand a screening system.

By incorporating additional data like geography, nationality, and connections between entities, XAI can distinguish between matches that are technically correct but operationally irrelevant, easing the workload for compliance teams.

Pelican AI showcased its "Self-Learning Optimization" technology for a major financial institution, successfully resolving over 75% of false positives. This was achieved through a combination of NLP and knowledge-based systems, offering clear explanations for each decision. This audit-ready setup provides detailed logs and traceable workflows, ensuring institutions meet regulatory expectations in an era of increased scrutiny.

Contextual Data and Risk Prioritization

Incorporating additional data points like dates of birth, addresses, and transaction patterns significantly improves the accuracy of sanctions screening. By layering these identifiers, institutions can better distinguish between genuine threats and legitimate cases. This approach moves away from simple string matching and embraces multi-factor analysis, allowing compliance teams to zero in on real risks.

Using Secondary Data for Better Screening

Secondary identifiers, such as passport numbers, tax IDs, and dates of birth, play a key role in refining screening processes. This method, called entity resolution, uses multiple criteria to differentiate individuals with similar names before escalating alerts. For instance, if a system flags a name match but finds the date of birth or address doesn’t align, it can automatically dismiss the alert.
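The dismissal logic described above can be sketched as follows. It assumes single, exact-format identifier values; real list entries often carry several dates of birth or only a birth year, which a production resolver would need to handle. The key names and the three outcome labels are hypothetical.

```python
def resolve(customer: dict, entry: dict) -> str:
    """Decide what to do with a name hit using secondary identifiers.

    Returns "dismiss", "priority-review", or "escalate". Missing data never
    dismisses an alert; only a hard conflict on a strong identifier does.
    """
    agreements, conflicts = [], []
    for key in ("passport_no", "tax_id", "dob"):
        ours, listed = customer.get(key), entry.get(key)
        if ours is None or listed is None:
            continue  # unknown on either side: neither clears nor confirms
        if ours == listed:
            agreements.append(key)
        else:
            conflicts.append(key)
    if conflicts and not agreements:
        return "dismiss"
    if agreements and not conflicts:
        return "priority-review"
    return "escalate"  # no usable identifiers, or the identifiers disagree


print(resolve({"dob": "1980-01-01"}, {"dob": "1975-06-30"}))  # dismiss
print(resolve({}, {"dob": "1975-06-30"}))                     # escalate
print(resolve({"passport_no": "X1"}, {"passport_no": "X1"}))  # priority-review
```

The important design choice is asymmetry: a missing identifier leaves the alert open, and only a positive conflict on a strong identifier clears it automatically.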

Batch screening that incorporates full data sets, including DOBs and addresses, achieves a false positive rate of 5%, compared with approximately 6% for real-time screening. One percentage point may seem minor, but at several hundred thousand screenings a month it adds up to thousands of avoided alerts.

Real-world examples underscore the impact of this approach. One global logistics company saw an immediate 60% drop in denied party screening alerts requiring manual review. Another organization reduced its daily manual review queue from over 50,000 alerts to between 20,000 and 25,000 by leveraging AI-driven enhancements.

For corporate entities, data such as Ultimate Beneficial Ownership (UBO) chains and entity structure information is essential for enforcing OFAC's "50 Percent Rule." Under this rule, an entity owned 50% or more, directly or indirectly and in the aggregate, by one or more blocked persons is itself treated as blocked, even if it does not appear on any list. Without this added context, screening systems risk overlooking indirect exposures, which could lead to penalties as high as $377,700 per violation as of January 2025.
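Ownership chains can be evaluated with a simple fixed-point loop. This sketch is a simplification of OFAC's 50 Percent Rule: it aggregates stakes held by blocked owners and propagates through chains of blocked entities, but it ignores control without ownership and the other nuances in OFAC's guidance. Entity names and stakes are hypothetical.

```python
def blocked_entities(listed: set[str],
                     ownership: dict[str, dict[str, float]]) -> set[str]:
    """Simplified OFAC 50 Percent Rule.

    ownership maps entity -> {owner: fractional stake}. An entity is treated as
    blocked if it is listed, or if blocked owners together hold 50% or more of it.
    The loop repeats until nothing changes, so blocking propagates down chains.
    """
    blocked = set(listed)
    changed = True
    while changed:
        changed = False
        for entity, owners in ownership.items():
            if entity in blocked:
                continue
            # Aggregate stakes across all blocked owners, not just the largest one.
            blocked_share = sum(stake for owner, stake in owners.items() if owner in blocked)
            if blocked_share >= 0.5:
                blocked.add(entity)
                changed = True
    return blocked


ownership = {
    "A": {"SDN_X": 0.30, "SDN_Y": 0.25, "Other": 0.45},  # 55% held by blocked persons
    "B": {"A": 0.60, "Other": 0.40},                     # majority owned by blocked A
    "C": {"SDN_X": 0.40, "Other": 0.60},                 # only 40% blocked ownership
    "D": {"C": 1.00},                                    # owned by an unblocked entity
}
print(sorted(blocked_entities({"SDN_X", "SDN_Y"}, ownership)))
# ['A', 'B', 'SDN_X', 'SDN_Y']
```

Entity C stays unblocked at 40%, so its wholly owned subsidiary D is not blocked by the rule either, although both could still warrant enhanced due diligence.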

These refined matching techniques lay the groundwork for AI agents to further prioritize risks effectively.

AI Agents for Risk-Based Prioritization

Building on advances in entity resolution, AI agents now rank alerts by risk level, making the review process even more efficient. Combined with the NLP and risk-scoring methods described earlier, these agents create a dynamic and effective screening system. They don’t just flag potential matches; they rank them by risk factors such as geography, nationality, entity relationships, and historical patterns, so teams can tackle the most critical cases first.
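A minimal ranking sketch. The `Alert` fields mirror the risk factors named above, but the weights and the 0-to-1 jurisdiction rating are hypothetical; a real agent would derive them from the institution's own risk assessment.

```python
from typing import NamedTuple


class Alert(NamedTuple):
    alert_id: str
    match_probability: float    # from the risk-scoring model, 0..1
    jurisdiction_risk: float    # hypothetical 0..1 country-risk rating
    linked_to_listed: bool      # entity-relationship signal, e.g. shared owner
    prior_false_positives: int  # times this same pair was previously cleared


def priority(alert: Alert) -> float:
    """Hypothetical weighting of the risk factors named in the text."""
    score = 0.6 * alert.match_probability + 0.3 * alert.jurisdiction_risk
    if alert.linked_to_listed:
        score += 0.2
    # Historical pattern: repeatedly cleared pairs sink; the penalty is capped
    # so history alone cannot bury an alert.
    score -= 0.05 * min(alert.prior_false_positives, 4)
    return max(score, 0.0)


def triage(alerts: list[Alert]) -> list[str]:
    """Alert IDs ordered from highest to lowest priority."""
    return [a.alert_id for a in sorted(alerts, key=priority, reverse=True)]


alerts = [
    Alert("A1", 0.9, 0.8, True, 0),
    Alert("A2", 0.4, 0.1, False, 3),
    Alert("A3", 0.7, 0.9, False, 0),
]
print(triage(alerts))  # ['A1', 'A3', 'A2']
```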

One whitepaper highlighted how AI agents achieved 100% precision in approved onboardings, slashing manual sanctions review times from 5–20 minutes per alert to just 30 seconds. This was accomplished by automatically cross-referencing KYC documents and transaction data. These systems also streamline evidence gathering by pulling together information from KYC data, past reviews, and transaction histories, saving analysts significant time.

Risk-based calibration is becoming the new norm. Instead of applying the same thresholds across all customers, institutions now adjust screening sensitivity based on customer risk levels and geographic exposure. High-risk jurisdictions or unfamiliar counterparties face stricter thresholds, while long-standing, low-risk relationships experience fewer hurdles. This ensures compliance teams allocate their efforts where they’re needed most.
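Risk-based calibration often comes down to choosing the match threshold per segment. In this sketch the tier names, threshold values, placeholder country codes `XX`/`YY`, and the one-year cut-off for an "unfamiliar" counterparty are all hypothetical.

```python
# Hypothetical similarity cut-offs: a lower cut-off is stricter, because more
# near-matches become alerts.
THRESHOLDS = {"high": 0.75, "medium": 0.85, "low": 0.92}
HIGH_RISK_JURISDICTIONS = {"XX", "YY"}  # placeholder country codes


def alert_threshold(customer_tier: str, counterparty_country: str,
                    relationship_years: float) -> float:
    """Pick the match threshold for this customer/counterparty combination."""
    if counterparty_country in HIGH_RISK_JURISDICTIONS or relationship_years < 1:
        # High-risk geography or an unfamiliar counterparty: strictest level.
        return THRESHOLDS["high"]
    return THRESHOLDS[customer_tier]


def should_alert(score: float, customer_tier: str, counterparty_country: str,
                 relationship_years: float) -> bool:
    """True if the name-match score reaches the segment's threshold."""
    return score >= alert_threshold(customer_tier, counterparty_country, relationship_years)


print(should_alert(0.80, "low", "XX", 5))    # True: high-risk jurisdiction
print(should_alert(0.80, "low", "FR", 5))    # False: long-standing, low-risk
print(should_alert(0.80, "low", "FR", 0.5))  # True: unfamiliar counterparty
```

The same 0.80 match score alerts for a new or high-risk counterparty but not for a long-standing, low-risk one.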

Governance and Performance Metrics

AI-powered sanctions screening thrives on strong governance and measurable performance metrics. Without these, compliance teams risk creating systems that fail regulatory scrutiny. Clear structures and metrics are essential to ensure AI reduces false positives instead of introducing new risks.

With record retention now stretching to ten years, every AI decision made today needs to be defensible a decade from now. A solid governance framework is critical: it can mean the difference between shielding your organization from risk and facing penalties as high as $377,700 per violation.

Policy-Driven Governance Frameworks

A strong governance framework begins with the three lines of defense:

Business units that own the risk

Compliance and risk teams that monitor it

Independent internal audit functions

This structure prevents siloed operations, while board oversight ensures that sanctions programs receive the resources they need to be effective.

Transparency in AI models is no longer optional. Regulators increasingly expect models to offer clear explanations and avoid unintended bias, and frameworks such as the EU AI Act add formal transparency obligations for certain AI systems. Instead of issuing opaque decisions, systems must provide plain-English justifications. For instance, rather than flagging a name match without context, the system should clarify: "First names match, but last names are a fuzzy 0.67 match; DOB mismatch confirmed via passport."

Ambiguous cases must follow defined escalation procedures. Every cleared alert needs thorough documentation, including the data reviewed, the analyst's name, and a timestamp.

Regular independent testing and validation ensure AI systems perform as intended. Recalibration is equally important, especially with sanctions lists updating three to four times weekly. Monthly or quarterly threshold adjustments are necessary to maintain accuracy.

Key Metrics for Measuring Performance

To gauge whether an AI system is effective, organizations need the right metrics. The false positive rate (FPR) is a cornerstone for measuring accuracy. While older systems often exceed 95% false positives, well-tuned AI systems can lower this to 5%–6%.

Alert processing time is another critical measure of efficiency. Investigating a single false positive manually can take 5 to 20 minutes. AI-assisted systems, however, can bring this down to under 2 minutes - some even resolve alerts in as little as 30 seconds. This time-saving shift allows compliance teams to focus on more critical tasks.

Metrics like precision and recall help balance the risk of missing true positives with the burden of false alarms. Monitoring backlog trends ensures alert volumes remain manageable, while quality assurance error rates measure review accuracy. Productivity metrics, such as reports of up to a 400% increase in efficiency with AI, highlight the operational advantages of reducing manual work.
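The two common meanings of "false positive rate" are easy to conflate, so it helps to compute them side by side. A minimal sketch from confusion-matrix counts; the counts in the example are illustrative:

```python
def screening_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Confusion-matrix metrics for a screening run.

    tp: true matches alerted, fp: innocents alerted,
    fn: true matches missed, tn: innocents correctly not alerted.
    """
    def ratio(num: int, den: int) -> float:
        return num / den if den else 0.0

    return {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        # Share of alerts that were false: apparently how ">95%" figures are meant.
        "false_alert_share": ratio(fp, tp + fp),
        # Textbook false positive rate: share of innocent records that raised an alert.
        "false_positive_rate": ratio(fp, fp + tn),
    }


print(screening_metrics(tp=5, fp=95, fn=0, tn=9900))
```

With 5 true hits among 100 alerts and 10,000 screened records, 95% of alerts are false even though fewer than 1% of innocent records raised an alert, so it is worth checking which definition a benchmark uses before comparing figures.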

| Key Performance Indicator | Legacy System Benchmark | AI-Driven Benchmark |
|---|---|---|
| False Positive Rate | >95% | 5%–6% |
| Alert Processing Time | 5–20 minutes | < 2 minutes |
| Recalibration Frequency | Annual (often stale) | Monthly or Quarterly |
| Record Retention | 5 Years (Old Standard) | 10 Years (New 2025 Standard) |

These metrics are shaping the future of sanctions screening, making AI-driven systems a vital tool for compliance teams.

Stablerail's AI-Driven Sanctions Screening Approach

Stablerail takes a proactive stance on sanctions screening by integrating AI at the moment of transaction decision - before any stablecoin payment is executed. Through mandatory AI-driven pre-sign checks, the platform ensures secure business decisions while keeping finance teams in the driver's seat. This hybrid approach, where AI handles the heavy compliance work, avoids full automation and ensures human oversight remains intact.

Every payment intent undergoes rigorous pre-sign checks, including sanctions screening, taint analysis, anomaly detection, and counterparty risk scoring. These checks culminate in a Risk Dossier that delivers one of three outcomes: PASS, FLAG, or BLOCK. For perspective, traditional screening tools often suffer from false-positive rates exceeding 95%, and institutions that add contextual AI filters have reduced false positives by 30% to 60%. Stablerail’s system builds on this with a suite of specialized pre-sign agents designed for thorough compliance checks.
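How individual checks roll up into a single outcome is not spelled out here, so the following is only a hypothetical sketch and not Stablerail's actual logic: any confirmed hit blocks, any warning flags, and an empty result set fails safe to FLAG because the checks are mandatory.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str         # e.g. "sanctions", "taint", "anomaly", "counterparty"
    severity: str     # "ok", "warn", or "hit"
    explanation: str  # plain-English reason shown to the approver


def dossier_outcome(results: list[CheckResult]) -> tuple[str, list[str]]:
    """Hypothetical roll-up: any hit blocks, any warning flags, otherwise pass."""
    if not results:
        return "FLAG", ["no checks ran"]  # mandatory checks missing: fail safe
    for r in results:
        if r.severity not in {"ok", "warn", "hit"}:
            raise ValueError(f"unknown severity {r.severity!r} for {r.name}")
    reasons = [f"{r.name}: {r.explanation}" for r in results if r.severity != "ok"]
    if any(r.severity == "hit" for r in results):
        return "BLOCK", reasons
    if reasons:
        return "FLAG", reasons
    return "PASS", reasons


print(dossier_outcome([
    CheckResult("sanctions", "ok", "No list match"),
    CheckResult("anomaly", "warn", "Payment at 03:00, outside usual hours"),
]))
```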

Pre-Sign Agents for Compliance

Stablerail's pre-sign agents ensure every transaction is vetted before reaching the signing stage. These agents match wallet addresses and entities against global sanctions lists, including those maintained by OFAC, UK OFSI, and the EU.

The platform's taint analysis goes beyond basic list matching by examining transaction histories and fund flows. This helps identify indirect exposure to illicit actors - a gap often missed by traditional systems. Additionally, counterparty risk scoring flags Politically Exposed Persons (PEPs) and entities requiring Enhanced Due Diligence (EDD), adding another layer of scrutiny.
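Reachability-based taint analysis can be sketched as a breadth-first walk back through funding sources. This sketch assumes EVM-style hex addresses (compared case-insensitively) and an in-memory map of who funded whom; the addresses are placeholders, and real taint analysis typically also weighs amounts and proportions using blockchain analytics data.

```python
from collections import deque
from typing import Optional


def taint_exposure(start: str, incoming: dict[str, list[str]],
                   sanctioned: set[str],
                   max_hops: int = 3) -> Optional[tuple[int, list[str]]]:
    """Find the nearest sanctioned funding source within max_hops.

    incoming maps an address to the addresses that sent it funds.
    Returns (hops, path) or None. Hop 0 means the address itself is listed.
    """
    # Assumption: EVM-style hex addresses, so case does not matter.
    listed = {a.lower() for a in sanctioned}
    graph = {k.lower(): [v.lower() for v in vs] for k, vs in incoming.items()}
    origin = start.lower()
    queue = deque([[origin]])
    seen = {origin}
    while queue:
        path = queue.popleft()
        addr = path[-1]
        if addr in listed:
            return len(path) - 1, path
        if len(path) - 1 >= max_hops:
            continue  # do not walk further back than the configured depth
        for src in graph.get(addr, []):
            if src not in seen:
                seen.add(src)
                queue.append(path + [src])
    return None


incoming = {"0xC0": ["0xB1"], "0xB1": ["0xA9", "0xD4"]}
print(taint_exposure("0xC0", incoming, {"0xa9"}))  # (2, ['0xc0', '0xb1', '0xa9'])
```

Breadth-first order means the first sanctioned address found is also the closest one, which is usually the most relevant exposure to show an approver.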

| Stablerail Pre-Sign Checks | Function | Benefit |
|---|---|---|
| Sanctions Screening | Matches against global lists (OFAC, UK OFSI, EU) | Prevents transfers to prohibited entities |
| Taint Analysis | Evaluates transaction history and fund flow | Detects indirect links to illicit actors |
| Anomaly Detection | Monitors behavioral patterns and timing | Flags suspicious activities like structuring |
| Risk Dossier | Compiles identity and contextual data | Provides clear "PASS/FLAG/BLOCK" outcomes |
| Audit Trail | Logs timestamps and off-chain identifiers | Supports regulatory reporting and defense |

Anomaly Detection for Risk Mitigation

Anomaly detection plays a key role in reducing risks by spotting irregularities in transaction behavior. The system evaluates payment patterns, timing, and amounts against established norms. For example, transactions at odd hours, amounts structured to avoid detection, or deviations from usual payout patterns are flagged for review. This is especially critical as geopolitical changes lead to rapidly growing watchlists that overwhelm manual review processes, causing alert fatigue in older systems. Stablerail’s assistive model ensures AI flags potential risks and provides context, while human approvers retain the final say on high-risk decisions.
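The three behavioral checks mentioned above can be sketched against a payer's own history. The 3-standard-deviation limit, the placeholder 10,000 reporting threshold, and treating any never-before-seen UTC hour as unusual are all simplifying assumptions.

```python
from statistics import mean, stdev

# Hypothetical limits; a real deployment would tune these per customer segment.
Z_LIMIT = 3.0
REPORTING_THRESHOLD = 10_000  # placeholder value, used only to illustrate structuring


def anomaly_flags(past_amounts: list[float], past_hours: list[int],
                  amount: float, hour: int) -> list[str]:
    """Compare one payment against the payer's own history; return reasons to flag it."""
    flags = []
    if len(past_amounts) >= 2:  # stdev needs at least two data points
        mu, sigma = mean(past_amounts), stdev(past_amounts)
        if sigma > 0 and abs(amount - mu) / sigma > Z_LIMIT:
            z = abs(amount - mu) / sigma
            flags.append(f"amount {amount:,.2f} is {z:.1f} std devs from baseline")
    if past_hours and hour not in past_hours:
        flags.append(f"unusual time of day ({hour:02d}:00 UTC)")
    if 0.9 * REPORTING_THRESHOLD <= amount < REPORTING_THRESHOLD:
        flags.append("amount sits just below a reporting threshold (possible structuring)")
    return flags


past_amounts = [1000, 1100, 950, 1050, 1020]
past_hours = [9, 10, 11, 14, 15]
for flag in anomaly_flags(past_amounts, past_hours, 9_500, 3):
    print("-", flag)
```

Each flag carries its own reason string, which fits the assistive model described above: the system surfaces the evidence, and a human approver makes the call.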

Plain-English Explanations and Audit Trails

Every screening decision comes with a plain-English explanation, detailing the evidence behind it. Whether it’s a sanctions list match, suspicious transaction pattern, or a counterparty's risk score, approvers receive clear reasoning instead of cryptic verdicts.

Stablerail also maintains a comprehensive audit trail for every action - covering intent creation, checks performed, flags raised, overrides applied, approvals granted, and transactions signed. With the OFAC record-keeping requirement extended to ten years starting March 2025, this level of documentation is essential for defending decisions to auditors, boards, and regulators. By linking on-chain transactions to off-chain identifiers, Stablerail provides a defensible record that meets evolving compliance standards while supporting post-event analysis to fine-tune processes and reduce false positives further.
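One common way to make such a trail tamper-evident is to hash-chain its entries, so editing a past record is detectable unless every later entry is rewritten too. This is a generic sketch, not a description of how Stablerail stores its records; event names, actors, and the transaction hash are placeholders.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_event(log: list[dict], event: str, actor: str, details: dict) -> dict:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    body = {
        "event": event,
        "actor": actor,
        "details": details,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash and link; False if any entry was altered."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log: list[dict] = []
append_event(log, "intent_created", "alice", {"intent_id": "INT-1"})
append_event(log, "check_performed", "screening-agent", {"check": "sanctions", "result": "FLAG"})
append_event(log, "override_applied", "bob", {"reason": "DOB mismatch verified"})
append_event(log, "tx_signed", "bob", {"intent_id": "INT-1", "tx_hash": "0xabc"})
print(verify(log))  # True
```

Storing the on-chain transaction hash alongside the off-chain intent ID in the same chained entry is what links the two records for later review.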

The Future of AI in Sanctions Screening

AI's role in sanctions screening is evolving rapidly, promising smarter filtering, quicker decisions, and improved oversight. Traditional systems often struggled with excessive false positives, but newer AI solutions are delivering dramatic improvements. Some platforms have reported reductions in false positives ranging from 20% to 60%, with specialized models achieving up to a 94% decrease. With global sanctions lists expanding to approximately 79,830 designated individuals by March 2025 - a 17.1% annual growth rate - the urgency for intelligent, automated solutions has never been greater. These advancements signal a transformative shift in how sanctions screening is conducted.

Next-generation AI systems are moving beyond basic string matching to adopt symbolic matching, which categorizes specific name components before applying fuzzy logic. For instance, recognizing "LLC" as a type of organization or "John" as a personal name allows these systems to deprioritize generic terms like "Corporation" or "Industries". In September 2025, OpenSanctions introduced the logic-v2 matcher, which uses a taxonomy of over 161,000 items and 1.16 million spellings sourced from Wikidata. The result is deterministic, explainable name matching that answers the transparency concern OpenSanctions raised about justifying opaque, vector-based similarity scores to regulators.

This transparent and logical approach paves the way for more accurate and efficient real-time monitoring.

Real-time monitoring is increasingly replacing periodic batch screening, especially as sanctions lists are updated several times a week. By early 2025, AI agents had been reported to cut review times to just 30 seconds. These advances in real-time monitoring, combined with enhanced risk prioritization, streamline compliance processes significantly. For instance, institutions using AI-integrated systems have reported a 24% drop in false-positive transaction alerts and a 31% improvement in converting cases into SARs (Suspicious Activity Reports). Additionally, hybrid approaches combining natural language processing (NLP) and machine learning have reportedly boosted compliance productivity by as much as 400%.

Alongside these technical advancements, governance frameworks are also advancing. Explainable AI (XAI) is now central to these frameworks, moving away from opaque deep-learning models to systems that provide plain-language explanations that satisfy regulatory requirements. The ability to defend screening decisions with clear, auditable reasoning is becoming a key differentiator. LatentBridge highlighted this shift:

In 2026, institutions will differentiate not by reviewing more alerts but by reviewing the right ones.

FAQs

How does AI cut sanctions-screening false positives without missing real matches?

AI makes sanctions screening more precise by improving how names and entities are matched, so fewer harmless customers are mistakenly flagged. A recent Federal Reserve Board working paper found that large language models (LLMs) reduced false positives by about 92% compared with traditional fuzzy matching while improving true-match detection by about 11%. These systems use natural language processing (NLP) to handle complex name variations, address formats, and cultural nuances, so precision improves without sacrificing the detection of genuine matches.

What data beyond names can help reduce false positives?

Using more than just names can drastically cut down on false positives. By integrating behavioral and contextual data, like transaction patterns, counterparty risk scores, and unusual behaviors, you can better identify genuine risks. Key elements to consider include:

Time-of-day activity: Does the timing of the transaction align with typical behavior?

Amounts versus baseline: Are the amounts consistent with historical patterns?

Payout patterns: Do the disbursements follow expected trends?

Adding policy enforcement and narrative explanations - backed by solid evidence - further sharpens the ability to separate real threats from harmless matches. Plus, tools like real-time monitoring and blockchain analytics provide deeper insights, making it easier to stay accurate and compliant.

How can we prove AI screening decisions to regulators years later?

Keeping a thorough audit trail lets you explain AI screening decisions to regulators, even years later. That means documenting every step: payment intent creation, checks conducted, flags raised, overrides made, approvals granted, and sign-offs, all paired with detailed, evidence-backed explanations. Such records are key to maintaining both transparency and accountability over time.
